AI contract redlining uses large language models to suggest tracked changes in contracts, measured against your firm's negotiation standards. The technology is maturing fast, but the tools vary wildly in how they handle three things that matter: accuracy of suggestions, traceability to your playbook positions, and confidentiality of the contract text being analyzed. This guide covers the mechanics, the evaluation criteria, and the architectural choices that separate useful tools from risky ones.

In This Guide

- 01 What AI Contract Redlining Actually Means

- 02 How AI Redlining Works, Mechanically

- 03 Generic AI Suggestions vs. Playbook-Driven Redlining

- 04 What Separates Good AI Redlines from Garbage

- 05 The Confidentiality Problem Nobody Advertises

- 06 The Third-Party Paper Problem

- 07 How to Evaluate AI Redlining Tools

- 08 Where the Market Is Headed

- 09 How ContractKen Approaches Redlining

- 10 Frequently Asked Questions

01 What AI Contract Redlining Actually Means

Redlining is what lawyers do every day: read a contract, identify terms that deviate from acceptable positions, and propose specific language changes. It produces a marked-up document with tracked changes and explanatory comments that the counterparty can review, accept, or push back on.

AI contract redlining automates part of this process. A large language model reads the contract, compares it against a set of standards (either built-in or defined by your organization), identifies deviations, and generates specific replacement language as tracked changes. (For a broader overview of how AI-powered contract review works, see our Review product page.)

The key word is "part." The best implementations keep the lawyer in the decision-making seat. The AI proposes; the lawyer disposes. Every suggestion requires human review and acceptance before it touches the document. This reflects both a professional responsibility requirement under ABA Model Rule 1.1 (Competence) and a practical reality: AI redlines, even good ones, require judgment calls that only a lawyer with deal context can make.

AI redlining is not the same as AI contract review. Review identifies issues. Redlining goes further: it proposes specific replacement language as tracked changes. The output is a marked-up document ready for the counterparty, not a report listing problems.

02 How AI Redlining Works, Mechanically

Understanding what happens under the hood helps you evaluate tools and set realistic expectations. The process has four stages:

- Stage 1: Clause Detection. The AI reads the contract and identifies individual clauses by type: limitation of liability, indemnification, termination, IP assignment, confidentiality, and so on. A single indemnification obligation might be spread across three separate paragraphs, with carve-outs buried in a definitions section and caps referenced in an exhibit. Good tools handle this. Weak ones miss the scattered pieces.

- Stage 2: Position Assessment. Each clause is compared against a reference standard. In generic tools, that standard is a built-in model of "reasonable" contract terms. In playbook-driven tools, it is your organization's defined positions for that clause type, for that contract type, in that jurisdiction.

- Stage 3: Language Generation. For clauses that deviate, the AI generates specific replacement language. The output should be actual contract language a lawyer would write, fitting the drafting style of the existing agreement, with a comment explaining the rationale.

- Stage 4: Delivery. Suggestions are presented for review. How they're delivered matters enormously for adoption.
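The four stages can be sketched as a small pipeline. This is a minimal illustration, not any vendor's implementation: the `STANDARDS` table, the phrase-matching test, and all function names are hypothetical, and Stage 1 assumes sections arrive already labelled by topic, where a real tool would use a model.

```python
from dataclasses import dataclass


@dataclass
class Clause:
    topic: str  # clause type, e.g. "termination"
    text: str


@dataclass
class Suggestion:
    topic: str
    original: str
    replacement: str
    comment: str
    accepted: bool = False  # Stage 4: nothing is applied until a lawyer accepts


# Hypothetical reference standard: topic -> (required phrase, approved language)
STANDARDS = {
    "termination": (
        "30 days",
        "Either party may terminate on 30 days' written notice.",
    ),
}


def detect_clauses(sections: dict[str, str]) -> list[Clause]:
    """Stage 1 stand-in: assumes sections are already labelled by topic."""
    return [Clause(topic, text) for topic, text in sections.items()]


def deviates(clause: Clause) -> bool:
    """Stage 2: does the clause lack the phrase required for its topic?"""
    standard = STANDARDS.get(clause.topic)
    return standard is not None and standard[0] not in clause.text


def suggest(clause: Clause) -> Suggestion:
    """Stage 3: pair replacement language with an explanatory comment."""
    required, approved = STANDARDS[clause.topic]
    comment = f"Standard for {clause.topic} requires '{required}'."
    return Suggestion(clause.topic, clause.text, approved, comment)


def review(sections: dict[str, str]) -> list[Suggestion]:
    """Run stages 1-3 and return suggestions awaiting lawyer review."""
    return [suggest(c) for c in detect_clauses(sections) if deviates(c)]
```

The `accepted` flag defaults to `False` on purpose: in this sketch the pipeline only produces proposals, and applying one is a separate, human step.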

Three Delivery Approaches Compared

| Delivery Method | How It Works | Practical Impact |
|---|---|---|
| Separate report | AI produces a document listing suggested changes. Lawyer manually applies edits. | Doubles the work. Low adoption. |
| Auto-applied tracked changes | AI inserts tracked changes directly without lawyer review. | Fast, but dangerous. Errors get buried in volume. |
| Task pane with one-click apply | Suggestions appear in a sidebar inside Microsoft Word. Lawyer reviews each one and applies it as a standard tracked change. | Lawyer sees context before applying. Counterparty cannot tell AI was involved. |

03 Generic AI Suggestions vs. Playbook-Driven Redlining

This is the most important distinction in the category, and the one most vendor marketing glosses over.

Generic AI Redlining

The tool reads the contract and suggests changes based on its training data, general market norms, or a built-in "best practices" model. The suggestions are reasonable in a vacuum. They might propose mutual limitation of liability, standard carve-outs for willful misconduct, or 30-day cure periods.

The problem: your organization may have very specific positions that differ from market norms for good reasons. Your SaaS customer agreements might accept uncapped indemnification for IP infringement because your risk profile supports it. A generic tool does not know this. It will suggest capping that indemnification, creating busywork for the lawyer who has to reject the suggestion and explain why.

Playbook-Driven Redlining

The tool compares the contract against your organization's defined negotiation positions, clause by clause, for the specific contract type under review. Each clause topic has multiple positions:

- Preferred position: Your ideal terms. For limitation of liability in a SaaS agreement: "Vendor-only cap at 2x annual fees, with uncapped carve-outs for IP infringement, indemnification, and willful misconduct."

- Acceptable position: Middle ground. Same clause: "Vendor-only cap at 1.5x annual fees, carve-outs for indemnification and willful misconduct only."

- Walkaway position: The floor below which you escalate. "Mutual cap at 1x annual fees, willful misconduct carve-out only. Below this, escalate to partner."

When the AI flags a deviation, it tells the lawyer which playbook position the clause falls below and suggests replacement language pulled from the organization's own clause library. The redline suggestion is traceable: the lawyer can see exactly which standard triggered it and why.

"The value of playbook-driven redlining is not speed. It is consistency. Twenty lawyers reviewing the same contract type will apply the same positions, every time, without drift."

A typical playbook covers 20-30 clause topics per contract type. Each topic carries three positions, guidance notes explaining the reasoning, and links to approved clause language. Playbook-driven redlining operationalizes this institutional knowledge.
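One way to picture a playbook topic is as a small data structure with ordered positions. The sketch below is an assumption for illustration only: it reduces the limitation-of-liability example above to a single number (the cap as a multiple of annual fees) and ignores carve-outs and mutuality, which a real playbook would also test. The class names and the rule ID `4.2` are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    PREFERRED = "Preferred"
    ACCEPTABLE = "Acceptable"
    WALKAWAY = "Walkaway"
    BELOW_WALKAWAY = "Below walkaway: escalate"


@dataclass(frozen=True)
class Position:
    tier: Tier
    min_cap_multiple: float  # cap as a multiple of annual fees
    guidance: str


@dataclass(frozen=True)
class PlaybookTopic:
    rule_id: str  # hypothetical identifier, used for traceability
    name: str
    positions: tuple[Position, ...]  # ordered from best to worst

    def classify(self, cap_multiple: float) -> Tier:
        """Return the best tier whose threshold the clause meets."""
        for position in self.positions:
            if cap_multiple >= position.min_cap_multiple:
                return position.tier
        return Tier.BELOW_WALKAWAY


LIABILITY = PlaybookTopic(
    rule_id="4.2",
    name="Limitation of liability (SaaS)",
    positions=(
        Position(Tier.PREFERRED, 2.0, "Vendor-only cap at 2x annual fees."),
        Position(Tier.ACCEPTABLE, 1.5, "Vendor-only cap at 1.5x annual fees."),
        Position(Tier.WALKAWAY, 1.0, "Mutual cap at 1x annual fees."),
    ),
)


def trace(topic: PlaybookTopic, cap_multiple: float) -> str:
    """Produce a traceable label such as 'Playbook rule 4.2, Acceptable'."""
    return f"Playbook rule {topic.rule_id}, {topic.classify(cap_multiple).value}"
```

For example, `trace(LIABILITY, 1.5)` yields a label the reviewing lawyer can follow back to the rule that triggered the suggestion, which is the traceability property described above.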

| Dimension | Generic AI Redlining | Playbook-Driven Redlining |
|---|---|---|
| Standard applied | Market norms, training data | Your organization's defined positions |
| Suggestion traceability | None: "the AI thinks..." | Full: "Playbook rule 4.2, Preferred" |
| Replacement language | AI-generated | Your clause library (pre-approved) |
| Consistency | Varies by reviewer | Uniform every time |
| Junior reviewer impact | Still dependent on experience | Guided by playbook with rationale |
| Setup required | Minimal | Playbook creation (one-time) |
| Organizational IP capture | None | Encodes negotiation knowledge |

04 What Separates Good AI Redlines from Garbage

Lawyers who have used AI redlining tools know the frustration: a tool that catches obvious issues but misses the subtle ones, or generates suggestions that are technically correct but stylistically wrong. Here is what to look for.

Precision

A good redline edits exactly what needs to change and leaves everything else alone. "Surgical" is the industry term, and it matters. A tool that rewrites entire paragraphs when only a phrase needs changing creates noise. The test: take a clause where only the liability cap amount needs to change. Does the tool change just the amount, or does it rewrite the entire section?
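The liability-cap test can be made measurable with a word-level diff. The sketch below uses Python's standard `difflib`; the function names and the idea of a "churn" ratio are assumptions for illustration, not an industry metric.

```python
import difflib


def changed_words(original: str, redlined: str) -> list[tuple[str, str]]:
    """Return (old, new) word runs that differ between two clause versions."""
    a, b = original.split(), redlined.split()
    matcher = difflib.SequenceMatcher(a=a, b=b, autojunk=False)
    changes = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":
            changes.append((" ".join(a[i1:i2]), " ".join(b[j1:j2])))
    return changes


def churn(original: str, redlined: str) -> float:
    """Fraction of the original clause's words touched by the redline."""
    total = len(original.split())
    touched = sum(len(old.split()) for old, _ in changed_words(original, redlined))
    return touched / total if total else 0.0


before = "Vendor's total liability shall not exceed one (1) times the annual fees."
after = "Vendor's total liability shall not exceed two (2) times the annual fees."

print(changed_words(before, after))  # [('one (1)', 'two (2)')]
```

A surgical edit touches only the amount, so churn stays low; a tool that rewrites the whole section for the same change would score close to 1.0 on the same clause.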

Context Awareness

Contracts are internally cross-referenced. A limitation of liability clause might reference defined terms in Section 1, exceptions in Section 8, and an exhibit schedule. Certain clauses are regularly split across multiple sections. An indemnification obligation might have the basic commitment in one paragraph, carve-outs in another, procedural requirements in a third, and caps cross-referencing the limitation of liability section. A good tool detects all fragments, wherever they sit, and lets the user manage them as a single topic.
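Managing scattered fragments as a single topic amounts to grouping detected pieces by clause topic regardless of where they sit. A minimal sketch, assuming detection has already tagged each fragment (the `Fragment` fields and section numbers are hypothetical):

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Fragment:
    section: str  # where the text sits in the contract
    topic: str    # clause topic assigned by detection
    role: str     # e.g. "commitment", "carve-out", "procedure", "cap"


def group_by_topic(fragments: list[Fragment]) -> dict[str, list[Fragment]]:
    """Collect every fragment of a topic, preserving document order."""
    grouped: dict[str, list[Fragment]] = defaultdict(list)
    for fragment in fragments:
        grouped[fragment.topic].append(fragment)
    return dict(grouped)


detected = [
    Fragment("5.1", "indemnification", "commitment"),
    Fragment("9.2", "indemnification", "carve-out"),
    Fragment("11.4", "termination", "commitment"),
    Fragment("14.3", "indemnification", "cap"),
]

topics = group_by_topic(detected)
print([f.section for f in topics["indemnification"]])  # ['5.1', '9.2', '14.3']
```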

Commentary

Every tracked change should come with an explanatory comment. This helps the reviewing lawyer understand the rationale and provides counterparty-ready language explaining the edit. A redline without a comment is a redline the counterparty will call about, which defeats the efficiency gain.

Drafting Style Consistency

Replacement language should match the existing agreement. If the contract uses "shall" rather than "will," the redline should use "shall." If defined terms are capitalized, the redline should follow suit. Style mismatches are the fastest way for a counterparty to tell that AI was involved.
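The "shall" versus "will" check is simple enough to sketch. The version below is deliberately naive and is an assumption for illustration: it counts the two modal verbs, picks the dominant one, and swaps lowercase occurrences in the replacement text. It would mishandle "will" used as a noun, so a real tool would need more than a regular expression.

```python
import re


def dominant_modal(contract_text: str) -> str:
    """Return 'shall' or 'will', whichever the contract uses more often."""
    shall = len(re.findall(r"\bshall\b", contract_text, flags=re.IGNORECASE))
    will = len(re.findall(r"\bwill\b", contract_text, flags=re.IGNORECASE))
    return "shall" if shall >= will else "will"


def match_modal(replacement: str, contract_text: str) -> str:
    """Rewrite the replacement language to use the contract's dominant modal."""
    target = dominant_modal(contract_text)
    other = "will" if target == "shall" else "shall"
    return re.sub(rf"\b{other}\b", target, replacement)


contract = "Vendor shall deliver the Services. Customer shall pay the Fees."
print(match_modal("Vendor will indemnify Customer.", contract))
# Vendor shall indemnify Customer.
```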

Knowing When Not to Redline

Some clauses are already at or above the acceptable position. Some deviations are minor enough that flagging them creates more friction than the risk justifies. A good tool distinguishes between "this needs a tracked change" and "this is worth noting but not worth a redline." The former gets a change. The latter gets an informational flag.

05 The Confidentiality Problem Nobody Advertises

Every AI redlining tool sends your contract text to a large language model for analysis. For most tools, this means the full text of the agreement, including party names, deal terms, dollar amounts, and sensitive business terms, reaches provider servers in readable form.

This creates a legal problem that the industry has largely chosen to ignore. In February 2026, Judge Rakoff's ruling in United States v. Heppner held that documents created using consumer AI lacked privilege protection because the user had disclosed content to a third party.

Enterprise agreements improve the picture. But as we discussed in our Heppner analysis, "no training" does not mean "never had it." Your text still reaches the provider in readable form, passes through safety classifiers, and may be retained under carve-out provisions.

Before adopting any AI redlining tool, ask one question: does my contract text reach the AI provider's servers in readable form? If yes, you have a third-party disclosure to evaluate. The most defensible architecture is one where confidential text never leaves your environment.

A tool that replaces party names, dollar amounts, and identifying terms with consistent placeholders before sending text to the AI provider eliminates the privilege question entirely. The AI sees "PARTY_A shall indemnify PARTY_B for losses up to AMOUNT_1." The mapping table stays local. Speed and accuracy are necessary. Confidentiality is foundational.
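The placeholder approach can be sketched in a few lines. This is a simplified illustration under stated assumptions, not ContractKen's implementation: party names are supplied by the caller rather than detected, only US-dollar amounts are matched, and the `Pseudonymizer` class and its methods are hypothetical names.

```python
import re

# Matches amounts such as $500,000 or $1,250.50 (US-dollar format only)
_AMOUNT = re.compile(r"\$\d+(?:,\d{3})*(?:\.\d+)?")


class Pseudonymizer:
    """Swap identifying values for consistent placeholders, reversibly."""

    def __init__(self, party_names: list[str]) -> None:
        self.mapping: dict[str, str] = {}    # placeholder -> real value (stays local)
        self._by_value: dict[str, str] = {}  # real value -> placeholder
        self._parties = list(party_names)
        for i, name in enumerate(party_names):
            self._assign(name, f"PARTY_{chr(ord('A') + i)}")

    def _assign(self, value: str, placeholder: str) -> None:
        self.mapping[placeholder] = value
        self._by_value[value] = placeholder

    def _mask_amount(self, match: re.Match) -> str:
        value = match.group(0)
        if value not in self._by_value:  # reuse the placeholder for repeat amounts
            n = sum(p.startswith("AMOUNT_") for p in self.mapping) + 1
            self._assign(value, f"AMOUNT_{n}")
        return self._by_value[value]

    def mask(self, text: str) -> str:
        """Return text that is safe to send: no party names, no amounts."""
        text = _AMOUNT.sub(self._mask_amount, text)
        for name in sorted(self._parties, key=len, reverse=True):
            text = text.replace(name, self._by_value[name])
        return text

    def unmask(self, text: str) -> str:
        """Restore real values in text returned by the provider."""
        for placeholder in sorted(self.mapping, key=len, reverse=True):
            text = text.replace(placeholder, self.mapping[placeholder])
        return text


p = Pseudonymizer(["Acme Corp", "Globex LLC"])
clause = "Acme Corp shall indemnify Globex LLC for losses up to $500,000."
print(p.mask(clause))
# PARTY_A shall indemnify PARTY_B for losses up to AMOUNT_1.
```

Only the masked string would be sent for analysis; `p.mapping` never leaves the local process, and `unmask` restores the real values in whatever replacement language comes back.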

06 The Third-Party Paper Problem

Most AI redlining marketing shows the tool working on your own templates. The hard case, and the one that consumes 70%+ of in-house review time, is receiving a counterparty's draft and redlining it against your standards.

Third-party paper is harder for AI because:

- Unfamiliar structure. The counterparty's agreement uses different section numbering, defined terms, and organizational logic. The AI needs to parse this from scratch and map clauses to topics even when they are called different things.

- Implicit positions. What is missing matters as much as what is present. If the agreement contains no data protection provisions, a good tool should flag that as a missing clause against your playbook, not silently skip it.

- Cross-reference integrity. When you redline a counterparty's document, you are editing their internal cross-references. Changing a defined term in Section 2 might break references in Sections 7, 12, and Exhibit B.

- Style matching. Your redlines need to match the counterparty's drafting conventions. If they use "Vendor" and your playbook uses "Service Provider," the replacement language needs their terminology.
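Two of these checks reduce to simple set and search operations once clauses have been mapped to topics. The sketch below assumes that mapping already exists; the function names and sample data are hypothetical.

```python
import re


def missing_topics(playbook_topics: set[str], detected_topics: set[str]) -> list[str]:
    """Playbook topics with no corresponding clause in the counterparty draft."""
    return sorted(playbook_topics - detected_topics)


def stale_term_references(sections: dict[str, str], old_term: str) -> list[str]:
    """Sections that still use a defined term after it was renamed elsewhere."""
    pattern = re.compile(rf"\b{re.escape(old_term)}\b")
    return [name for name, text in sections.items() if pattern.search(text)]


playbook = {"limitation_of_liability", "indemnification", "data_protection"}
detected = {"limitation_of_liability", "indemnification", "governing_law"}
print(missing_topics(playbook, detected))  # ['data_protection']

# Suppose a redline renamed "Deliverables" to "Work Product" in Section 2 only
sections = {
    "Section 2": "\"Work Product\" means all materials created under this Agreement.",
    "Section 7": "Vendor assigns all rights in the Deliverables to Customer.",
    "Exhibit B": "Acceptance criteria for the Deliverables are set out below.",
}
print(stale_term_references(sections, "Deliverables"))  # ['Section 7', 'Exhibit B']
```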

When evaluating tools, test them on third-party paper. Upload a counterparty's agreement you have already redlined manually and compare the AI's suggestions against your actual work product.
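That comparison can be scored rather than eyeballed. A minimal sketch, assuming each redline (yours and the tool's) has been reduced to the clause topic it touches; the topic labels and the function name are hypothetical, and matching on topic alone ignores whether the proposed language was actually right.

```python
def compare_redlines(manual: set[str], ai: set[str]) -> dict[str, object]:
    """Score the tool's redlined topics against a lawyer's manual redline."""
    matched = manual & ai
    return {
        # Share of the tool's suggestions the lawyer also made
        "precision": len(matched) / len(ai) if ai else 0.0,
        # Share of the lawyer's redlines the tool found
        "recall": len(matched) / len(manual) if manual else 0.0,
        "missed": sorted(manual - ai),  # issues the tool did not raise
        "extra": sorted(ai - manual),   # suggestions the lawyer did not make
    }


manual_topics = {"liability", "indemnification", "termination", "data_protection"}
ai_topics = {"liability", "indemnification", "governing_law"}

report = compare_redlines(manual_topics, ai_topics)
print(report["missed"])  # ['data_protection', 'termination']
print(report["extra"])   # ['governing_law']
```

The `missed` list is usually the more important one to read: it shows the issues a reviewer relying on the tool would not have seen.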

07 How to Evaluate AI Redlining Tools

The market has a dozen tools claiming AI redlining capability. Here is how to separate the ones that work from the ones that demo well.

The Evaluation Checklist

08 Where the Market Is Headed

Adoption is accelerating. The ACC/Everlaw GenAI Survey tracked corporate legal AI adoption jumping from 23% in 2024 to 52% in 2025. But redlining remains the least adopted use case: only 31% of in-house lawyers reported using AI for redlining, per Juro's 2025 survey. That gap represents both the difficulty of the problem and the size of the opportunity.

Three trends will shape the next 12-18 months:

- Confidentiality as a buying criterion. The Heppner ruling and the EU AI Act (high-risk provisions effective August 2, 2026) will force legal teams to ask the architecture question before evaluating features.

- Playbook integration as table stakes. Generic AI suggestions are useful for one-off reviews, but they do not scale. Enterprises will require tools that enforce organizational standards.

- Honest accuracy benchmarking. The Vals Legal AI Report found lawyers achieve roughly 79.7% accuracy on redlining tasks, while AI tools ranged from the mid-50s to 90%+. Vendors claiming accuracy without methodology will lose credibility.

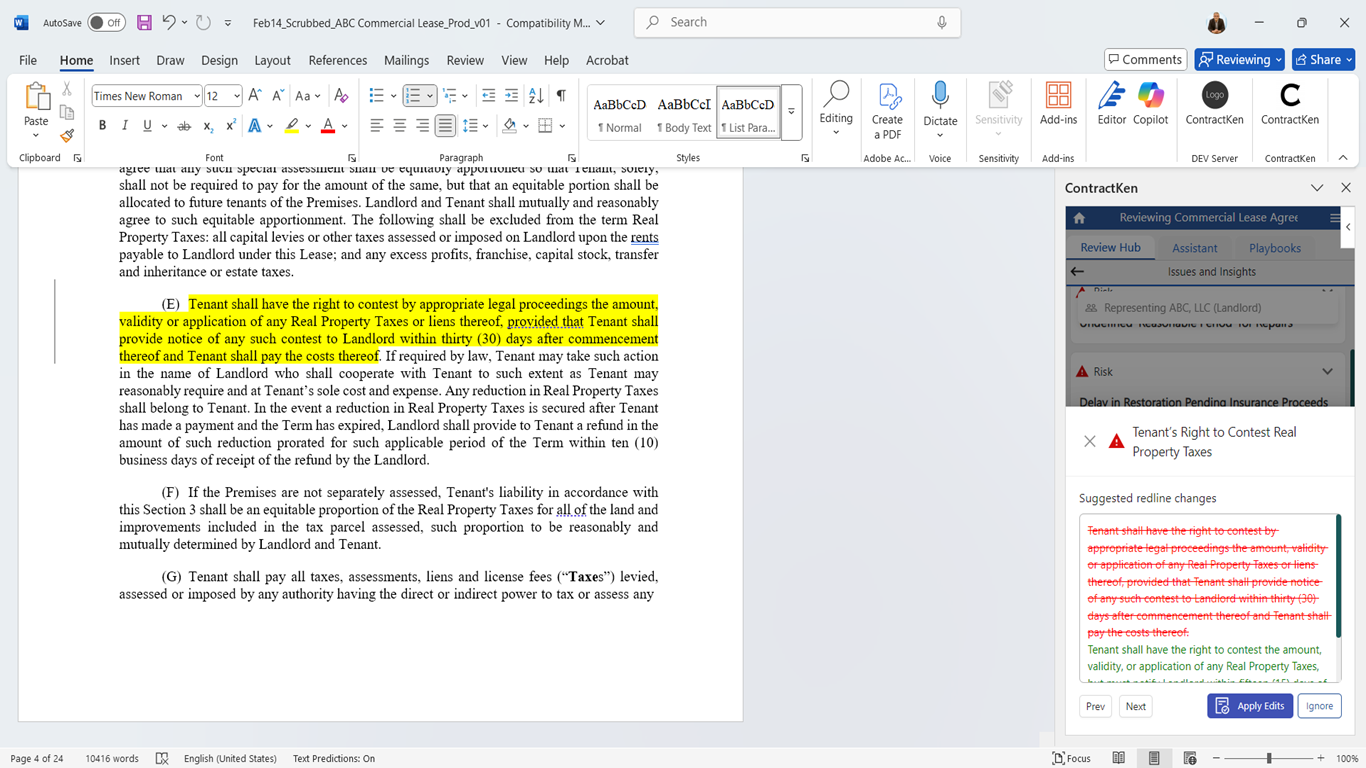

09 How ContractKen Approaches Redlining

ContractKen is a Microsoft Word add-in that runs inside the document where lawyers already work. The redlining workflow is built around three design principles:

The Lawyer Stays in the Driver's Seat

All redline suggestions appear in a task pane alongside the document. Each suggestion shows the flagged clause, the playbook position it falls below, the suggested replacement language, and an explanatory comment. The lawyer reviews each suggestion, navigates to the clause location, and applies the change with a single click. The tracked change appears exactly as if the lawyer had typed it. The counterparty sees standard Microsoft Word tracked changes and comments.

Playbook-Driven, 100% Coverage

ContractKen reviews every clause against your organization's defined positions. Each contract type gets its own playbook with 20-30 clause topics, each carrying Preferred, Acceptable, and Walkaway positions. (See our Playbooks page for how this works.) The system catches 100% of deviations from your playbook, including clauses split across multiple sections. If an indemnification obligation has its core commitment in Section 5, exceptions in Section 9, and caps cross-referenced in Section 14, ContractKen detects all three and lets you manage them as a single topic.

Confidentiality by Architecture

ContractKen's Moderation Layer pseudonymizes contract text before it reaches the AI provider. Party names, dollar amounts, and identifying details are replaced with consistent placeholders. The mapping table stays in your environment. For the full analysis, see: AI and the Loss of Privilege: US v Heppner.

See playbook-driven redlining in action on your own contracts. Bring a third-party agreement. We will run it against a playbook and walk through the results together.

10 Frequently Asked Questions

Can AI replace lawyers in contract redlining?

No. AI accelerates clause detection, position assessment, and language generation. The judgment calls (which deviations to fight for, which to concede, how to sequence negotiations) remain with the lawyer.

How accurate is AI contract redlining?

It depends on the tool and contract type. The Vals Legal AI Report found human lawyers achieve roughly 79.7% accuracy, with AI tools ranging from the mid-50s to over 90%. Ask vendors for accuracy data on your specific contract types.

What types of contracts can AI redline?

Most tools handle NDAs, MSAs, SaaS agreements, service agreements, employment contracts, and procurement agreements. Performance varies on specialized contracts. Playbook-driven tools can handle any contract type for which you define positions.

How long does AI redlining take?

AI analysis typically completes in 1-5 minutes. Total time, including lawyer review, varies. Teams report reducing initial-pass review from 40+ minutes to under 10 minutes for standard agreements.

Can I use ChatGPT or Claude directly for redlining?

You can, with serious limitations. General-purpose AI tools do not integrate with Microsoft Word tracked changes, cannot reference your playbook, and receive your full contract text without pseudonymization. For professional use on client matters, purpose-built tools with confidentiality architecture are the responsible choice.

What is the difference between AI redlining and AI contract review?

Review identifies issues and produces findings. Redlining proposes specific replacement language as tracked changes. Review tells you what is wrong. Redlining tells you what to write instead.

How do I set up a playbook for AI redlining?

Start with one or two frequently negotiated contract types. Identify 20-30 clause topics, define three positions for each (Preferred, Acceptable, Walkaway), add approved clause language and guidance notes. Most teams complete their first playbook in 2-3 workshops.

"The right AI redlining tool does not replace your judgment. It makes sure your judgment is applied consistently, every time, on every contract."

Sources

- ABA Model Rule 1.1 - Competence, American Bar Association

- United States v. Heppner, No. 24-cr-00475 (S.D.N.Y. Feb. 10, 2026)

- ABA Formal Opinion 512: "Generative AI Tools" (July 2024)

- ACC/Everlaw, "Generative AI and the In-House Legal Function" Survey (2025)

- Juro, "Legal AI Adoption Survey" (2025)

- Vals AI, "Legal AI Report: Benchmarking AI Accuracy in Contract Tasks" (2025)

- EU AI Act, Regulation (EU) 2024/1689, high-risk provisions effective August 2, 2026

- Wolters Kluwer, "Future Ready Lawyer 2026" Survey

Sharma, Amit. "AI Contract Redlining: What It Is, How It Works, and How to Evaluate the Tools." ContractKen Blog, April 2026.
